Linux Home Router Traffic Shaping With FQ_CoDel

March 26, 2020 · Benjamin Lee · linux · router · bufferbloat

Background

Bufferbloat refers to excessively large network buffers causing high latency.

Many operating systems are configured by default to enable good performance on low-latency, high-bandwidth links, such as those in data centers and corporate LANs. For example, with Gigabit Ethernet (1 Gbps), 1,500-byte packets (1,538 bytes on the wire once Ethernet framing overhead is included) can be sent at a rate of approximately 81,274 packets/sec. At this rate, a buffer of 1,000 packets represents a delay of ~12 ms.

1,000,000,000 bits/sec × 1 byte/8 bits × 1 packet/1,538 bytes ≈ 81,274 packets/sec

1,000 packets × 1 sec/81,274 packets × 1,000 ms/sec ≈ 12 ms

However, at low bandwidths, such as the 1 Mbps upload speed offered by my home ISP, a 1,000-packet buffer would represent a massive ~12,000 ms delay. Worse still, by the time the tail of the queue is reached, those packets are unlikely to still be needed, since the users who sent them have already retried or given up.
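The arithmetic above can be sketched as a small shell helper. This is only an illustration: the function name `queue_delay_ms` is made up for this example, and it assumes every queued packet is a full-size frame occupying 1,538 bytes on the wire.

```shell
# Estimate how long a full FIFO queue takes to drain.
# Usage: queue_delay_ms <link rate in bits/sec> <queue length in packets>
# Assumes full-size packets: 1,538 bytes each on the wire.
queue_delay_ms() {
    awk -v rate="$1" -v packets="$2" \
        'BEGIN { printf "%.0f\n", packets * 1538 * 8 * 1000 / rate }'
}

queue_delay_ms 1000000000 1000   # Gigabit Ethernet: prints 12
queue_delay_ms 1000000 1000      # 1 Mbps uplink:    prints 12304
```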

Traditional QoS (Quality of Service) systems use packet metadata such as port numbers and TCP flags to identify high-priority traffic. For example, an administrator can prioritize SIP traffic on port 5060 for VoIP calls or reserve bandwidth for TCP ACKs. However, these systems are complex to administer and difficult to maintain as applications and requirements change. Additionally, these systems are becoming less relevant due to the increasing amounts of opaque traffic transported over HTTPS and VPNs.

Home internet connections exacerbate the bufferbloat problem because they combine asymmetric links with low upload bandwidths and poorly configured modems. Home users are also uploading increasing amounts of data, such as photos, video conferencing streams, and security camera footage.

CoDel and FQ_CoDel

CoDel (Controlled Delay) is an Active Queue Management algorithm that drops packets based on delay rather than queue length. FQ_CoDel (Fair Queueing Controlled Delay) combines CoDel with fair queueing to share bandwidth between flows. CoDel was introduced in a 2012 publication, Controlling Queue Delay. The initial Linux implementations of sch_codel.c and sch_fq_codel.c were committed soon after.

Although CoDel is designed to be a "no-knobs" algorithm, tuning is required for low-bandwidth links. For example, at 1 Mbps, transmitting a single 1,500-byte packet takes ~12 ms, so the CoDel target should be increased from the default 5 ms. Additionally, the Bufferbloat Wiki recommends disabling ECN for uplinks slower than 4 Mbps.
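To see where the ~12 ms figure comes from, and to check other link speeds, the per-packet transmit time can be computed directly. This is a minimal sketch: `packet_tx_ms` is a hypothetical helper name, and it uses the 1,500-byte packet size from the paragraph above rather than the on-the-wire size.

```shell
# Time in milliseconds to transmit one packet at a given link rate.
# Usage: packet_tx_ms <link rate in bits/sec> [packet size in bytes]
packet_tx_ms() {
    awk -v rate="$1" -v bytes="${2:-1500}" \
        'BEGIN { printf "%.1f\n", bytes * 8 * 1000 / rate }'
}

packet_tx_ms 1000000     # 1 Mbps:  prints 12.0, well above the 5 ms default target
packet_tx_ms 10000000    # 10 Mbps: prints 1.2, comfortably below it
```

If the result is larger than the 5 ms default, a single packet cannot leave the queue within the target, which is the case where raising the target makes sense.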

Implementation

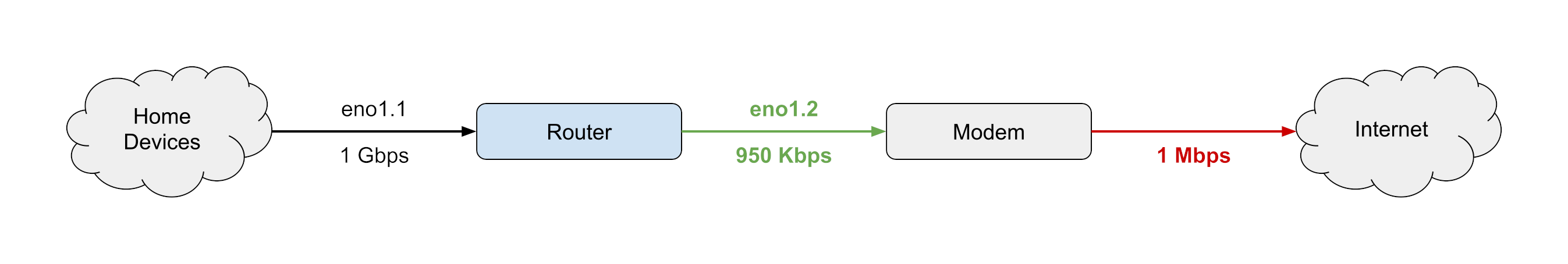

The simplest implementation is to create a single HTB bucket with FQ_CoDel on the "WAN" interface connected to the modem. The rate limit must be lower than the available upload bandwidth (~95% of it) to ensure that packets are not buffered by the modem; however, you will have to experiment to find the right value for your connection.

    # tc qdisc add dev eno1.2 root handle 1: htb default 1
    # tc class add dev eno1.2 parent 1: classid 1:1 htb rate 950kbit quantum 1514
    # tc qdisc add dev eno1.2 parent 1:1 fq_codel target 15ms noecn
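When experimenting with the rate limit, it can help to derive it from a measured link speed instead of typing it by hand. The helper below is a sketch only; `shaped_rate_kbit` is a hypothetical name, and the 95% default simply mirrors the rule of thumb above.

```shell
# Shaped rate in kbit/s: a percentage of the measured link speed, rounded down.
# Usage: shaped_rate_kbit <measured speed in kbit/s> [percent, default 95]
shaped_rate_kbit() {
    measured_kbit=$1
    percent=${2:-95}
    echo $(( measured_kbit * percent / 100 ))
}

shaped_rate_kbit 1000       # prints 950
shaped_rate_kbit 1000 90    # prints 900
```

The result can then be applied to the existing class without rebuilding the hierarchy, for example with `tc class change dev eno1.2 parent 1: classid 1:1 htb rate "$(shaped_rate_kbit 1000)kbit" quantum 1514` run as root.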

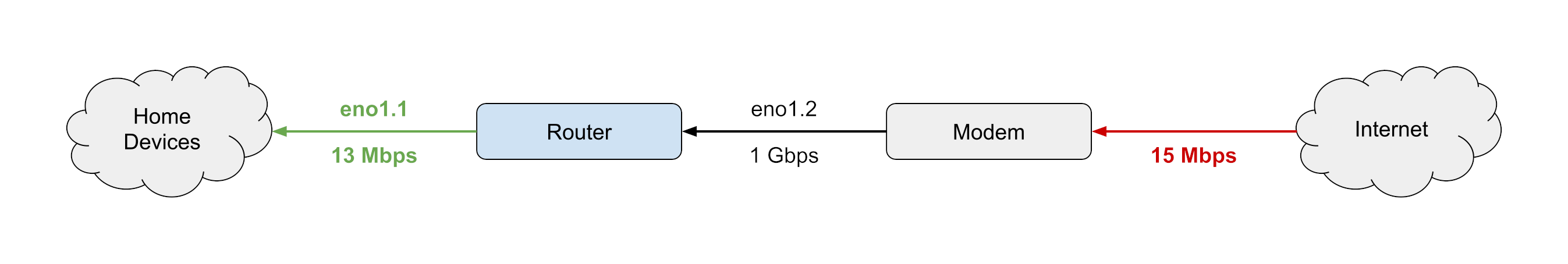

If download shaping is required, you can use a similar configuration on the "LAN" interface connected to your home devices. Download bufferbloat is a complex subject and will be discussed further in a future article. Usually you will have to use a significantly lower rate limit for downloads (~85%). If you have significant download traffic originating from the router, you may want to consider ingress shaping with an ifb device instead.

    # tc qdisc add dev eno1.1 root handle 1: htb default 1
    # tc class add dev eno1.1 parent 1: classid 1:1 htb rate 13mbit quantum 1514
    # tc qdisc add dev eno1.1 parent 1:1 fq_codel
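For the ifb alternative mentioned above, a rough sketch looks like the following. It is not a configuration I have tuned: the function name `setup_ifb_shaping`, the `RUN` dry-run variable, and the `ifb0` device name are all assumptions made for this example. Traffic arriving on the WAN interface is redirected to the IFB device, which is then shaped like any egress interface.

```shell
# Sketch: shape downloads by redirecting WAN ingress traffic through an IFB device.
# Usage: setup_ifb_shaping <wan interface> <rate> [ifb device]
# Set RUN=echo to print the commands instead of executing them.
setup_ifb_shaping() {
    wan=$1          # WAN interface facing the modem, e.g. eno1.2
    rate=$2         # shaped download rate, e.g. 13mbit
    ifb=${3:-ifb0}  # IFB device name (assumed)

    # Create the IFB device and bring it up.
    ${RUN:-} ip link add name "$ifb" type ifb
    ${RUN:-} ip link set dev "$ifb" up

    # Redirect everything arriving on the WAN interface to the IFB device.
    ${RUN:-} tc qdisc add dev "$wan" handle ffff: ingress
    ${RUN:-} tc filter add dev "$wan" parent ffff: protocol all u32 \
        match u32 0 0 action mirred egress redirect dev "$ifb"

    # Shape on the IFB device with the same HTB + FQ_CoDel layout as above.
    ${RUN:-} tc qdisc add dev "$ifb" root handle 1: htb default 1
    ${RUN:-} tc class add dev "$ifb" parent 1: classid 1:1 htb rate "$rate" quantum 1514
    ${RUN:-} tc qdisc add dev "$ifb" parent 1:1 fq_codel
}
```

Running `RUN=echo setup_ifb_shaping eno1.2 13mbit` prints the seven commands for review; running it as root without `RUN` applies them.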

Alternatives

Some users may want to perform additional shaping by creating multiple HTB buckets with different rate limits and classifying packets using nftables, iptables, or tc filters. There are many examples of these kinds of configurations; however, I do not think they are necessary for most use cases.

Alternatively, you might want to consider using CAKE, which adds interesting features such as pre-configured priority queues, fair queueing across LAN hosts when using NAT, and ACK filtering.

Testing

One way to test your configuration is to run ping while saturating your bandwidth (e.g. uploading a large file). If you are suffering from bufferbloat, ping will show significant delays and drops. I also recommend the DSLReports speed test and Fast.com speed test because they include tools for measuring latency.
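To compare runs before and after shaping, it can be handy to summarize the round-trip times from a saved ping log or a slice of one. The helper below is a small sketch; `ping_summary` is a hypothetical name, and it assumes the Linux ping reply format with a `time=<value> ms` field.

```shell
# Summarize round-trip times from ping output read on stdin.
# Only reply lines containing "time=" are counted; other lines are ignored.
ping_summary() {
    awk -F'time=' '/time=/ {
        split($2, f, " ")      # f[1] is the numeric RTT that precedes " ms"
        rtt = f[1] + 0
        if (n == 0 || rtt < min) min = rtt
        if (rtt > max) max = rtt
        sum += rtt
        n++
    }
    END {
        if (n > 0)
            printf "%d replies, min/avg/max = %.1f/%.1f/%.1f ms\n", n, min, sum / n, max
    }'
}
```

For example, `ping -c 20 1.1.1.1 | ping_summary` prints a one-line summary while an upload is running. ping prints its own statistics on exit as well, so this is mainly useful for logs captured during a specific part of a test.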

Here is an example of running ping while saturating a 1 Mbps upload connection:

    64 bytes from 1.1.1.1: icmp_seq=256 ttl=55 time=2944 ms
    [dropped packet icmp_seq=257]
    64 bytes from 1.1.1.1: icmp_seq=258 ttl=55 time=3109 ms
    64 bytes from 1.1.1.1: icmp_seq=259 ttl=55 time=3211 ms
    64 bytes from 1.1.1.1: icmp_seq=260 ttl=55 time=3235 ms

Based on the observed ~3,000 ms delay, and assuming a FIFO queue filled with full-size packets, we can estimate that the modem transmit queue length is ~250 packets (3 sec × ~81 packets/sec at 1 Mbps ≈ 244 packets).
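The same estimate can be reproduced for other measurements with a short helper. This is a sketch under the same assumptions as the estimate above: a FIFO queue in which every packet is a full-size frame of 1,538 bytes on the wire. The name `queue_packets` is made up for this example.

```shell
# Estimate FIFO queue length in packets from an observed queueing delay.
# Usage: queue_packets <delay in ms> <link rate in bits/sec>
# Assumes full-size packets: 1,538 bytes each on the wire.
queue_packets() {
    awk -v ms="$1" -v rate="$2" \
        'BEGIN { printf "%.0f\n", ms / 1000 * rate / (1538 * 8) }'
}

queue_packets 3000 1000000   # prints 244, i.e. roughly 250 packets
```

Real queues hold a mix of packet sizes, so the true packet count may be higher than this figure.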

After implementing traffic shaping with FQ_CoDel, ping shows an improvement:

    64 bytes from 1.1.1.1: icmp_seq=306 ttl=55 time=27.3 ms
    64 bytes from 1.1.1.1: icmp_seq=307 ttl=55 time=25.9 ms
    64 bytes from 1.1.1.1: icmp_seq=308 ttl=55 time=28.8 ms
    64 bytes from 1.1.1.1: icmp_seq=309 ttl=55 time=33.1 ms

Much better!

Additional Reading

- b1c1l1 blog: Linux Home Router Download Bufferbloat Analysis

- RFC 8290: The Flow Queue CoDel Packet Scheduler and Active Queue Management Algorithm

- ACM Queue: Controlling Queue Delay

- LWN.net: The CoDel queue management algorithm

- Bufferbloat Wiki: Best Practices for Benchmarking CoDel and FQ CoDel

- Debian Manpages: tc-fq_codel(8)