Linux Home Router Download Bufferbloat Analysis

April 19, 2020 · Benjamin Lee · linux · router · bufferbloat

Introduction

In my previous article, Linux Home Router Traffic Shaping With FQ_CoDel, I mentioned that download shaping with rate limiting can avoid download bufferbloat. In this article, I take a closer look at the factors that contribute to download bufferbloat and at how rate limiting works around the problem.

Background

Transmission Control Protocol (TCP) uses congestion control algorithms to adapt to network conditions. TCP senders increase their congestion window to use all available bandwidth until congestion is detected. However, detecting remote congestion is difficult in practice, and the techniques for doing so continue to evolve.

Traditionally, TCP congestion control algorithms (e.g. Reno, CUBIC) assumed that congestion causes packet loss. Bufferbloat breaks this assumption by queueing packets, rather than dropping them, during periods of congestion. Despite being delayed, these packets are eventually delivered and ACKed, preventing senders from detecting and adapting to the congestion.
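To put rough numbers on this, the delay added by a full buffer is the amount of buffered data divided by the link rate. The following is a minimal sketch; the function name and the example buffer size and link rates are assumptions chosen for illustration, not measurements.

```python
def queue_delay_ms(buffered_bytes: int, link_mbps: float) -> float:
    """Milliseconds needed to drain buffered_bytes over a link of link_mbps."""
    bits = buffered_bytes * 8
    bits_per_ms = link_mbps * 1000  # 1 Mbit/s is 1000 bits per millisecond
    return bits / bits_per_ms


# Hypothetical example: 1.25 MB sitting in an upstream buffer.
for mbps in (100, 10):
    print(f"{mbps} Mbit/s link: {queue_delay_ms(1_250_000, mbps):.0f} ms of added delay")
```

The same buffer that adds a modest delay on a fast link adds ten times as much on a link that is ten times slower, which is why oversized buffers hurt most at the slowest hop.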

Some TCP congestion control algorithms (e.g. Vegas, BBR) can detect congestion by measuring delay. Google created BBR and uses it in production. However, concerns have been raised about how bandwidth is shared when flows using loss-based algorithms compete with flows using delay-based algorithms.
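As a rough illustration of delay-based detection, here is a Vegas-style sketch. It compares the throughput expected at the lowest observed RTT with the throughput actually achieved at the current RTT, and treats the difference as an estimate of how many packets are sitting in queues. The function name and the `alpha` and `beta` thresholds are assumptions for this example, and BBR works differently (it models bottleneck bandwidth and RTT rather than using this rule).

```python
def vegas_adjust(cwnd: float, base_rtt: float, rtt: float,
                 alpha: float = 2.0, beta: float = 4.0) -> float:
    """Return the next congestion window (in packets), Vegas style.

    base_rtt is the smallest RTT seen so far (assumed to be the unqueued path
    delay); rtt is the latest measurement. Both are in seconds.
    """
    expected = cwnd / base_rtt           # packets/s if nothing were queued
    actual = cwnd / rtt                  # packets/s actually achieved
    queued = (expected - actual) * base_rtt  # estimated packets in queues
    if queued < alpha:
        return cwnd + 1   # little queueing: probe for more bandwidth
    if queued > beta:
        return cwnd - 1   # delay is building: back off before any loss
    return cwnd           # within the target band: hold steady


print(vegas_adjust(20, base_rtt=0.020, rtt=0.020))  # no added delay
print(vegas_adjust(20, base_rtt=0.020, rtt=0.040))  # RTT has doubled
```

The key point is that the second call backs off even though no packet was lost, which is exactly what a loss-based sender cannot do.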

Explicit Congestion Notification (ECN) extends IP and TCP so that congested network nodes can signal congestion explicitly by marking packets rather than dropping them. However, ECN adoption has been slow due to misbehaving devices dropping packets with ECN bits set. Most servers now support ECN for clients that request it, but most clients still do not enable ECN by default. FQ_CoDel enables ECN marking by default.
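For reference, the ECN field is the two least significant bits of the IPv4 TOS byte (or IPv6 Traffic Class byte), as defined in RFC 3168. The small sketch below decodes it; the helper name is made up for this example.

```python
# ECN codepoints from RFC 3168 (low two bits of the TOS / Traffic Class byte).
ECN_CODEPOINTS = {
    0b00: "Not-ECT",  # sender does not support ECN
    0b10: "ECT(0)",   # ECN-capable transport
    0b01: "ECT(1)",   # ECN-capable transport
    0b11: "CE",       # congestion experienced, set by a congested node
}


def ecn_codepoint(tos: int) -> str:
    """Return the ECN codepoint name for a TOS / Traffic Class byte."""
    return ECN_CODEPOINTS[tos & 0b11]


print(ecn_codepoint(0x02))  # ECT(0)
print(ecn_codepoint(0x03))  # CE
```

A queue that supports ECN marking changes ECT(0) or ECT(1) to CE instead of dropping the packet, and the receiver echoes that signal back to the sender over TCP.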

Download Bufferbloat

Download bufferbloat is difficult to analyze because it is highly variable and depends on many factors outside of our control. These factors include, but are not limited to: TCP congestion control algorithm, ECN support, round-trip time (RTT), traffic shaping, and network congestion.

In particular, TCP congestion control algorithms run on the sender, which in the case of downloads is the remote server. If the server is configured to use a delay-based congestion control algorithm such as BBR, which is designed to reduce latency despite the presence of bufferbloat, then it will be especially difficult to measure and reproduce bufferbloat-related problems.

Rate Limiting

One workaround for download bufferbloat is rate limiting. Depending on how it is implemented, this may also be called ingress shaping. An example implementation is available in my previous article.

Using rate limiting to avoid download bufferbloat is counterintuitive because it involves dropping valid packets that will need to be retransmitted. It also seems inefficient to sacrifice a significant amount of download bandwidth and leave the link underutilized.

It's worth reiterating that rate limiting is a workaround. When senders send us too much data, our options for asking them to slow down are limited. Although some senders might respond to delay and/or ECN, nearly all senders will respond to packets dropped due to rate limiting.
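To make "packets dropped due to rate limiting" concrete, here is a minimal token bucket policer sketch. It is only an illustration of the general idea and not necessarily the mechanism used in my previous article; the class name, parameters, and example numbers are all assumptions.

```python
class TokenBucket:
    """Admit packets at up to rate_bps, allowing bursts of burst_bytes."""

    def __init__(self, rate_bps: float, burst_bytes: float):
        self.rate = rate_bps / 8      # refill rate in bytes per second
        self.burst = burst_bytes      # bucket capacity in bytes
        self.tokens = burst_bytes     # start with a full bucket
        self.last = 0.0               # time of the previous packet, seconds

    def allow(self, now: float, size: int) -> bool:
        """Return True to forward a packet of `size` bytes, False to drop it."""
        elapsed = now - self.last
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        if size <= self.tokens:
            self.tokens -= size
            return True
        return False  # over the limit: the drop is our signal to the sender


# Hypothetical limit: 8000 bit/s (1000 bytes/s) with a 1500 byte burst.
bucket = TokenBucket(rate_bps=8000, burst_bytes=1500)
print(bucket.allow(0.0, 1500))  # first packet fits in the initial burst
print(bucket.allow(0.0, 1500))  # immediate second packet exceeds the limit
```

Each dropped packet looks like congestion loss to a loss-based sender, which responds by shrinking its window.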

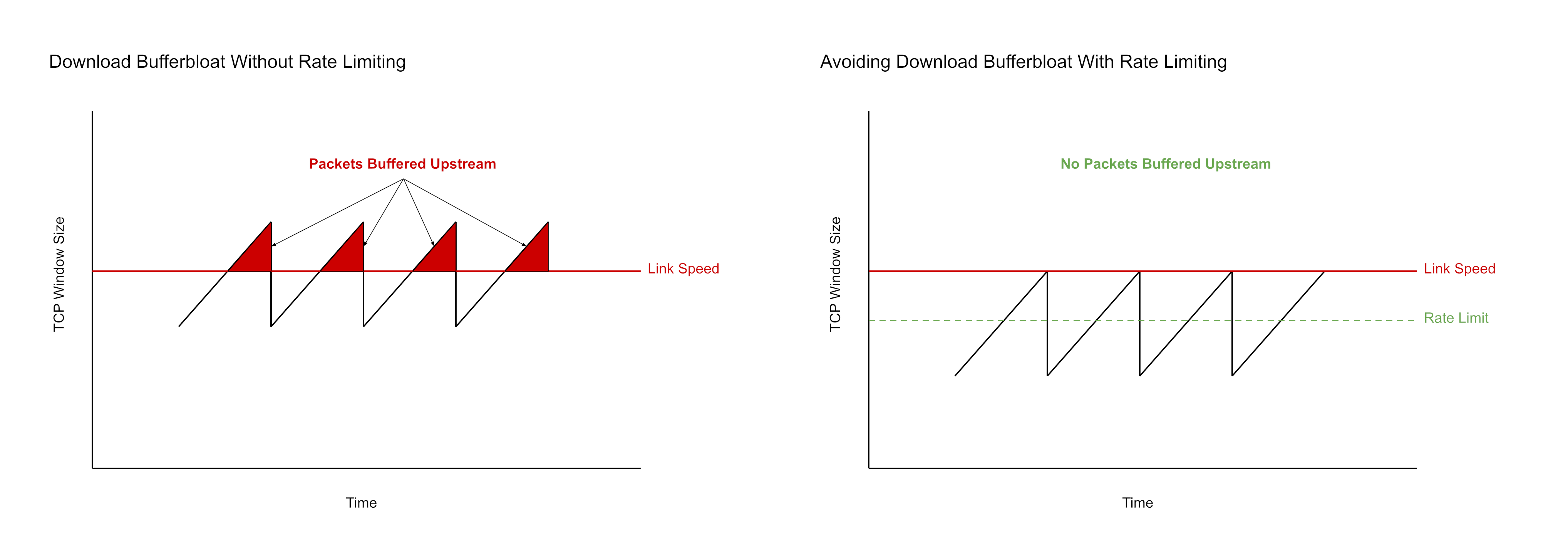

Here is a simplified visualization of the download bufferbloat problem and how rate limiting works around the problem. This example is based on traditional TCP congestion control algorithms such as Reno using additive increase multiplicative decrease. As the window size increases, download bandwidth is exceeded and packets are buffered upstream, causing latency. Rate limiting below the actual link speed drops downloaded packets early, so the sender cuts its window before its sending rate exceeds the link speed and the upstream buffer never has a chance to fill.
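The same idea can be sketched as a toy simulation. This is a deliberately crude model under stated assumptions: one flow, one time step per RTT, a window that grows by one packet per RTT and halves on any drop, and drops that take effect immediately. The function name and all numbers are made up for illustration.

```python
def simulate(link_rate: int, buffer_size: int, rate_limit=None, rounds: int = 200) -> int:
    """Return the peak upstream queue (packets) for a toy AIMD sender.

    link_rate and rate_limit are in packets per RTT; buffer_size is in packets.
    """
    window, queue, peak = 1, 0, 0
    for _ in range(rounds):
        # Packets beyond the link rate pile up in the upstream buffer.
        queue = max(0, queue + window - link_rate)
        upstream_drop = queue > buffer_size
        if upstream_drop:
            queue = buffer_size  # buffer is full, the excess is lost
        # Our shaper drops as soon as the sender exceeds the configured limit.
        shaper_drop = rate_limit is not None and window > rate_limit
        peak = max(peak, queue)
        if upstream_drop or shaper_drop:
            window = max(1, window // 2)  # multiplicative decrease
        else:
            window += 1                   # additive increase
    return peak


print("no shaping:    peak queue =", simulate(100, 100))
print("limit of 90:   peak queue =", simulate(100, 100, rate_limit=90))
```

With these assumed numbers, the unshaped sender only backs off once the upstream buffer is completely full, while the shaped sender is cut back before its window ever exceeds the link rate, so nothing queues upstream. The price is the unused headroom between the limit and the link rate.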

Caveats

Ideally, upstream devices would implement active queue management on their transmit queues to us and no download shaping would be necessary on our end.

Download shaping is at best a workaround for well-behaved senders that respond to packet loss by slowing down. Download shaping will have limited value in other scenarios such as floods, and may even be counterproductive if packet loss results in amplification.